Long-haul jet engines used to come off the wing every 5,000 hours. Today they run 40,000. Haul truck engines went from 10,000 hours between rebuilds to 30,000. Two decades of disciplined asset management and reliability engineering delivered that.

Those gains need defending. Traditional asset management has to continue, or the hard-won performance walks backward. But each improvement cycle delivers less than the last. Good operators will tell you reliability on its own is not worth much. Overall equipment effectiveness (OEE) is what they can plan on. At least in theory. And OEE is the number everyone games.

The next uplift will come from treating maintenance excellence as an operating system that aligns maintenance, operations, supply, and planning around the same constraint and targets. Simple problems still respond to experienced eyes on the ground hunting the handoffs between teams. Complex ones need simulation, digital twins, and AI‑supported integrity testing. Both matter. Neither replaces the other.

The pattern we see

Maintenance teams are working hard and hitting plan compliance. Work order backlogs are real but manageable, and OEE dashboards are green. Yet production still misses its targets, shutdowns overrun, and the same unscheduled losses show up quarter after quarter. Maintenance and operations point fingers at each other.

The gap is rarely inside any one function. It lives in the handoffs between them.

Parts are not on site when maintenance needs them. Shutdown scopes get reopened a week before execution. Operations push equipment past service intervals to hit a daily target and then wear the unscheduled failures next month. Each team optimises for its own goals, and the system pays the price.

I have seen this pattern across asset-intensive industries over 30 years. It is not a maintenance problem. It is a system problem.

Figure 1 Container and roll-on/roll-off ferry, Melbourne

What it costs

A gold mine in PNG had been acquired from a global major, but production was disappointing. The mine produced ore on only 289 days a year. Benchmarking against over 100 similar assets suggested 330 to 340 was achievable. Unscheduled losses accounted for 58 days. Scheduled maintenance at just 18 days was well below benchmarks. The operation was under‑maintaining and paying for it in breakdowns.

Most of the unscheduled loss occurred right before and immediately after major shuts. The team implemented a shuts readiness program tracking over 100 critical data points to hit 100% completion on time. Maintenance crews walked dress rehearsals on site before execution. At the same mine, the mill shutdown always ran long and blew the production plan. One option the team suggested was a simple change to how bolts and liners were installed that took hours out of the task.

The real breakthrough sat underneath. The liners were designed for nine months. The risers had an MTBF of six months. The risers were driving shutdown frequency, not the liners. Once the team reset shutdown frequency off actual failure data, scheduled maintenance days increased from 18 to 24 and production days rose by roughly 12% to 325 over two years.
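The arithmetic behind those numbers is worth laying out. What follows is a minimal sketch in Python, assuming the year splits cleanly into production, scheduled, and unscheduled days (the starting figures of 289, 58, and 18 do sum to 365). The function names are illustrative, and the unscheduled figure after the change is implied by that assumption, not a number the mine reported.

```python
DAYS_IN_YEAR = 365


def production_days(unscheduled_days: float, scheduled_days: float) -> float:
    """Days left for production once both kinds of downtime are removed."""
    return DAYS_IN_YEAR - unscheduled_days - scheduled_days


def shutdown_driver(design_life_months: dict[str, float]) -> tuple[str, float]:
    """Return the component with the shortest life; it sets the shutdown interval."""
    name = min(design_life_months, key=design_life_months.get)
    return name, design_life_months[name]


# Starting point, using the figures quoted in the text.
before = production_days(unscheduled_days=58, scheduled_days=18)

# After the shutdown frequency was reset off actual failure data.
after = 325
scheduled_after = 24

# Implied, not reported: assumes every non-production day is either
# scheduled or unscheduled downtime.
implied_unscheduled_after = DAYS_IN_YEAR - after - scheduled_after

uplift_pct = (after - before) / before * 100

driver, interval = shutdown_driver({"liners": 9, "risers": 6})

print(f"Production days before: {before}")
print(f"Uplift: {uplift_pct:.1f}%")
print(f"Implied unscheduled days after: {implied_unscheduled_after}")
print(f"Shutdown interval driven by {driver} at {interval} months")
```

Under that assumption, six extra scheduled days bought back roughly 42 unscheduled ones. The trade only shows up when both kinds of downtime sit in the same ledger.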

Figure 2 Crew discussing through‑the‑wall bolting, PNG

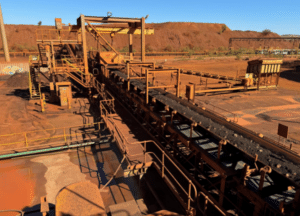

A large iron ore mine had missed its production targets by about 4% for over a decade. The third leading cause was “waiting for parts,” roughly 25% of the shortfall. We started at the warehouse. The first KPI was same‑day receipt, down from an average of three days. Parts with an identified source of supply went from around 50% to over 95%, which cut ordering delays and wrong‑part deliveries. Criticality‑based stocking was overhauled for production‑critical and long‑lead items. Within six months the warehouse was hitting its targets, and “waiting for parts,” once about 25% of the shortfall, had dropped out of the top ten losses.

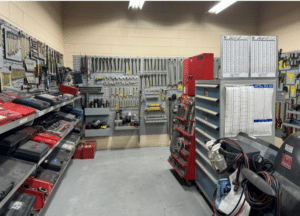

Sometimes the answer is simpler than anyone expects. A Canadian aluminium smelter was asked to take on 20% more mobile equipment maintenance from sister operations without adding labour or expanding the facility. The new GM and I walked the site and agreed that by doing 5S really well they had a chance. Throughput increased by 25% and rework dropped by about 5%. No capital. No additional headcount. Just discipline.

These are not unusual stories. In my experience, every heavy industrial operation has upside in the interactions between maintenance, shutdowns, inventory, and operations. The solutions are often straightforward. The hard part is seeing them, and that requires time on the ground.

Figure 3 Tool crib after 5S, Canada

Why “better maintenance” is not enough

Figure 4 Crew solving operational constraints with data, Pilbara

At a major LNG facility running five trains plus wells and shipping, a single train produces around 3.5 million dollars of gas a day. On one routine inspection, an operator identified a flange bolt that needed replacing. The maintenance team found a box of the right size on site. Good news. The bad news was the material data sheet was missing, and the plant manager rightly decided not to risk using unverified bolts on an LNG train. New bolts had to be ordered and hot‑shotted to site. Three days. Over 10 million dollars in lost revenue from one missing piece of paperwork on a bolt.

That is what asset management looks like when you trace the full cost. Not the price of the part. The price of the production you lose while waiting for it.
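As a rough sketch of that trace, the calculation below uses the figures from the bolt example. It assumes the train's full daily output is lost for every day it is down, with no partial rates or later recovery. The function name is illustrative.

```python
def lost_revenue(daily_revenue: float, days_down: float) -> float:
    """Revenue forgone while an asset is down, assuming output is fully lost."""
    return daily_revenue * days_down


TRAIN_REVENUE_PER_DAY = 3_500_000  # dollars of gas per train per day, from the text
DAYS_WAITING_FOR_BOLTS = 3

loss = lost_revenue(TRAIN_REVENUE_PER_DAY, DAYS_WAITING_FOR_BOLTS)
print(f"Lost revenue: ${loss:,.0f}")
```

Putting that figure next to the purchase price of the part, on the same page, is what changes stocking and documentation decisions.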

Maintenance excellence is not a maintenance department initiative. It is an operating system. Asset management, work management, parts, people, and information all need to work together. When they work in isolation, you get local wins and system losses. The best shutdown plan fails when work orders are added after the cutoff. A missing data sheet on a box of bolts can cost more than the maintenance budget for the month.

Figure 5 Rodding furnace, Australia

At a joint Suzuki and GM fabrication plant in Ontario, two identical production lines sat side by side. One built the Suzuki Vitara, the other the GM Tracker. Same vehicle. Same plant. Same workforce.

In practice, vastly different. One production engineer told me she would flat‑out refuse to drive the GM version on the test track, but would happily own a Vitara. The difference was the engineering change order system. Suzuki processed changes instantly and electronically. GM used batch processing on a quarterly cycle. That gap flowed through to inventory and to maintenance of the production machines. The Suzuki side used Teian, operator‑driven continuous improvement, feeding operator knowledge directly into how equipment was maintained. The GM side relied on OEM install standards.

Same plant. Same people. Dramatically different outcomes. How information flows, how changes are processed, and how operator knowledge is captured drives maintenance quality as much as any maintenance strategy.

At a submarine operation, the refit team was consistently outperforming the original build. The refit crews had years of hands‑on experience with the equipment and were applying it every day. But that experience was not being shared back to the new build team. Two teams, same platform, different results. When knowledge stays in one part of the system, the whole system underperforms.

Solve for the system outcome. Align maintenance, operations, supply, and planning around the same targets. Make sure information flows to where it is needed. The prize is not a better maintenance department. It is a more reliable, safer, higher‑performing operation.

Matching the strategy to the asset

The maintenance strategy itself matters. We all use run‑to‑failure, scheduled preventive, condition‑based, predictive, and prescriptive maintenance. The winning condition is to match the strategy to the criticality of the asset. Run‑to‑failure is right for some items. Predictive is essential for the assets that drive your production constraint.
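One way to make that matching explicit is a simple decision rule. The sketch below is illustrative only. The 1 to 5 consequence scale, the cut-off of 2, and the field names are assumptions made for the example and not a standard. Prescriptive maintenance is left out for brevity, and a real operation would set the rules from its own FMECA and criticality data.

```python
from dataclasses import dataclass
from enum import Enum


class Strategy(Enum):
    RUN_TO_FAILURE = "run-to-failure"
    SCHEDULED_PREVENTIVE = "scheduled preventive"
    CONDITION_BASED = "condition-based"
    PREDICTIVE = "predictive"


@dataclass
class Asset:
    name: str
    drives_constraint: bool      # does failure stop the production constraint?
    failure_consequence: int     # hypothetical scale: 1 (minor) to 5 (severe)
    condition_monitorable: bool  # can degradation be measured before failure?


LOW_CONSEQUENCE_CUTOFF = 2  # assumed threshold, for illustration only


def suggest_strategy(asset: Asset) -> Strategy:
    """Suggest a starting strategy from criticality; a sketch, not a standard."""
    if asset.drives_constraint:
        return Strategy.PREDICTIVE
    if asset.failure_consequence <= LOW_CONSEQUENCE_CUTOFF:
        return Strategy.RUN_TO_FAILURE
    if asset.condition_monitorable:
        return Strategy.CONDITION_BASED
    return Strategy.SCHEDULED_PREVENTIVE


# Hypothetical assets, invented for the example.
assets = [
    Asset("primary mill", drives_constraint=True, failure_consequence=5, condition_monitorable=True),
    Asset("workshop light fitting", drives_constraint=False, failure_consequence=1, condition_monitorable=False),
    Asset("standby pump", drives_constraint=False, failure_consequence=3, condition_monitorable=True),
    Asset("relief valve", drives_constraint=False, failure_consequence=4, condition_monitorable=False),
]

for asset in assets:
    print(f"{asset.name}: {suggest_strategy(asset).value}")
```

The point of writing the rule down is not the rule itself. It forces the question of which assets actually drive the constraint, and that is where the strategy conversation should start.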

Practitioners rely on proven methods such as RAM (reliability, availability, and maintainability) analysis, FMECA (failure modes, effects, and criticality analysis), RCM (reliability‑centred maintenance), and FRACAS (failure reporting, analysis, and corrective action system). Our article, Constraint to Control, showed that performance models like Lean, Theory of Constraints, and flow analysis cannot solve complex system problems on their own. The skill is the same here. Bring asset management and maintenance methods together into one integrated system view, so that decisions improve the operation and do not just help a function hit its KPIs.

For straightforward operations, walking the floor with experienced hands and hunting handoffs for unscheduled loss gets you there. For complex operations where multiple assets interact and the consequences of failure are severe, you need these frameworks feeding into coordinated simulation with clean failure data.

Figure 6 Iron ore conveyors, Pilbara

On conveyor inspections, experienced operators walking the line could spot faults, but they were exposed to moving machinery, noise, and weather, and false positives were common. Introducing drones, with experienced operators reviewing footage and training the analysis, cut false positives by more than half, lifted actual fault detection by roughly 5%, and dropped the all‑injury frequency rate from 2.0 to 1.85 in a year, with crews off the line of fire.

Figure 7 CF-18 Hornets on the flight line, Canada

At the other end of complexity, while performing QA on CF‑18 off‑wing engine remanufacturing for the Canadian Air Force, we could not work out why MTBF was not hitting standards. The answer was a complex interaction between three things. The wind tunnel was out of compliance. Power test sensors had too much variance from centre line, so the expected power curve was not actually being tested. Some turbine blade machining tools were using an older specification. It took systematic investigation across the full rebuild and test process to find it.

Get the manual process right first. Lock in good logic before layering technology on top of it.

Where to start

Three entry points, depending on what the operation needs.

If unscheduled loss is the dominant problem, start with root cause analysis and a review of your maintenance strategies. Match the strategy to the asset. Challenge whether you are over‑servicing low‑risk equipment while under‑investing in the assets that drive your constraint. Start on the ground and let the evidence lead.

If shutdowns are bleeding time and cost, start with shuts readiness. Track critical data points, run dress rehearsals, enforce scope cutoffs. Walk the shutdown tasks before you plan them. Check your component‑level MTBF data. The PNG risers were driving shutdown frequency, not the liners the plan was built around.

If “waiting for parts” shows up in your loss data, start with how parts get to the people who need them. Trace the path from request to receipt. The gaps in cataloguing, sourcing, stocking, and data quality are where the improvements sit.

These three threads connect. Fixing one often reveals the next. The entry point matters less than starting.

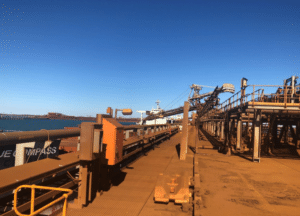

Figure 8 Iron ore ship loading, Pilbara

The system that keeps the system running

Sometimes the answer is 5S done properly. Sometimes it is a complex multi‑factor investigation like the CF‑18 engine rebuild. The common thread is getting on the ground and seeing the system for what it is.

In my experience, organisations that get this right treat maintenance excellence as an operating system, not a department initiative. They align maintenance, operations, supply, and planning around system outcomes. Information flows between teams, not just within them. The fundamentals come before the technology. And the floor gets walked. The hard‑won reliability gains are already banked. The next ones sit in the system around them.

The prize is significant. Maintenance costs down 10 to 20%. Production uplift of 2 to 5%. Fewer safety incidents. A team that runs on rhythm instead of reacting to the last crisis.

Figure 9 Celebrating a promotion (second from right), PNG

If your operation is facing persistent reliability losses, shutdown overruns, or production delays, it may be time to look beyond maintenance alone and examine the system around it. If you would like to discuss how to unlock performance across maintenance, operations, and supply, feel free to get in touch.